Technology can be a wonderful thing. The GPS tech in our smartphones can effortlessly direct us to almost any location. Password managing software helps lessen the frustration of remembering a bazillion different eight-character inputs.

But the same technology that keeps our luggage from being misplaced at the airport and helps us keep track of our wayward children can also be used for nefarious and even deadly purposes, especially in situations that involve stalkers and what’s known as intimate partner violence (IPV). And in some cases, the victim may have little to no idea that this seemingly innocuous technology is being used to stalk, control and potentially harm them.

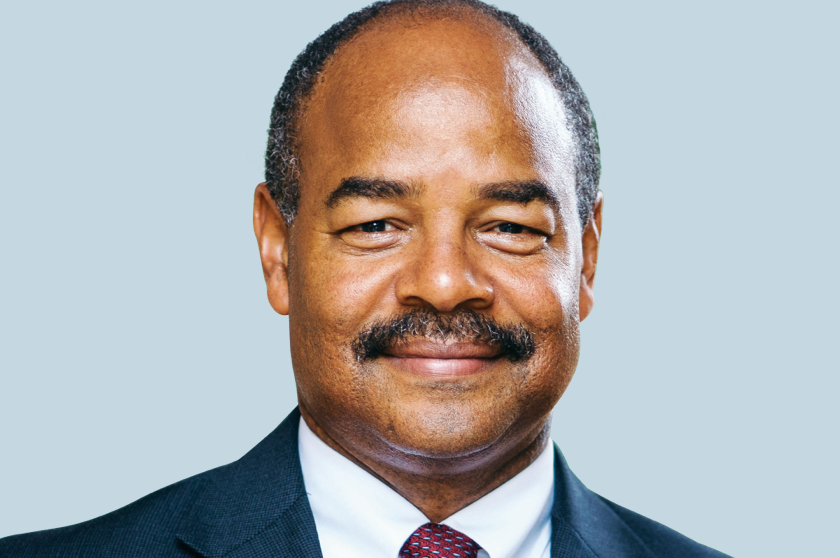

Technology’s troubling relationship to IPV was something Rahul Chatterjee, an assistant professor of computer sciences with the School of Computer, Data & Information Sciences (CDIS), first discovered as a graduate student at Cornell University.

“In security research, we assume attackers and hackers are everywhere,” says Chatterjee. “But we also assume that they don’t know the victim personally. With intimate partner violence, that very basic assumption is broken. And, therefore, all the security mechanisms that we have built in the last 30 years are ineffective.”

The Centers for Disease Control and Prevention estimates that one in every four women experiences IPV, with numbers trending even higher in the Black and transgender communities. Many of those cases involve the abuse of technology.

At Cornell, Chatterjee and his colleagues began digging into mobile spyware, or stalkerware as it’s also known. Some of the problems were obvious—phone-tracking mobile applications like mSpy and FlexiSPY that can do everything from raiding call logs to taking photos and recording audio of the user. But others were far more subtle. Google Maps, for instance, includes a location tracking feature, as does the app Find My iPhone.

“Those applications are at least on the face of it designed for some legitimate use, but they can easily be abused to spy on and stalk victims,” says Chatterjee. “Most of these applications don’t have any protection from being used against an intimate partner.”

In response to his findings, Chatterjee helped create a tech clinic at Cornell, a resource that victims of IPV could access to learn more about spyware and how to safely disable or remove it without alerting their stalker. He’s recreated the model at UW–Madison, setting up a tech clinic (techclinic.cs.wisc.edu) to help victims in the Madison area.

The clinic website is not yet intended for public use, says Chatterjee. Clients who wish to access the clinic’s services must first contact and work with Madison-based Domestic Abuse Intervention Services (DAIS) or one of several other organizations with which Chatterjee’s group has partnered.

Currently, Chatterjee’s clinic is staffed by students, many of whom are volunteering their time to help victims. The students are experts in technology and are trained in trauma-informed care, but they are not experienced in managing the tricky nuances of an abusive relationship, which is why the clinic relies on DAIS and its other partners to help provide effective advice to those who need it. Clients of the clinic are required to be in the room with the clinic volunteers and an advocate to avoid potentially volatile situations.

Creating awareness is typically the first goal—in many cases, the victims only suspect the use of spyware, possibly because an abuser showed up unexpectedly at a restaurant or workplace or discussed information that could only have been gleaned from a smartphone. If Chatterjee’s team does find spyware or a potentially suspicious setting on a phone, they will show the victim how to mitigate the concern by, for example, removing or disabling the offending software or helping them change their passwords to something their stalker is unlikely to know or guess. In some cases, Chatterjee’s team won’t change anything or leave any traces that the phone has been scanned, giving the victim a modicum of plausible deniability.

“Some of these situations can be seriously risky,” says Chatterjee. “In some cases, if they change the password, the abuser will discover it and it can escalate the violence. We have to be cautious, and, therefore, we always inform clients about the risks.”

In the classes he teaches at UW–Madison, Chatterjee often discusses the concept of threat modeling, the understanding of how software systems can be broken—and who might be able to break them. The hacker in question might be in a faraway land. Or they might be in the same house with you.

“I have a small hope that the people who are graduating from my classes will at least think twice before they create a ‘feature’ on their side system,” says Chatterjee. “They will step back and ask themselves first, ‘Can this feature be abused or used to take advantage of certain vulnerable populations?’”